vSphere with Tanzu High Availability with vSphere Zones

Reliability is one of the most important characteristics when providing IT services to others, whether that is a customer, a developer, or another operator. This of course also applies to your vSphere with Tanzu installation, as it provides the base infrastructure for your Kubernetes platform. This is why the Supervisor Cluster consists of three Supervisor Control Plane VMs, spread across three different ESXi hosts. If one of them fails, the Supervisor Cluster keeps running on the remaining two.

But what if there is a site failure? Maybe a power outage or a major network incident? Not only would your Supervisor Cluster go down, but all Tanzu Kubernetes Clusters with it. Until now, there was nothing you could do about this from a platform perspective.

But now, with vSphere 8 there is a new feature called “vSphere Zones”. You can now define different zones in vSphere, each mapped to a vSphere Cluster, and spread your Supervisor Cluster installation across them.

Create vSphere Zones

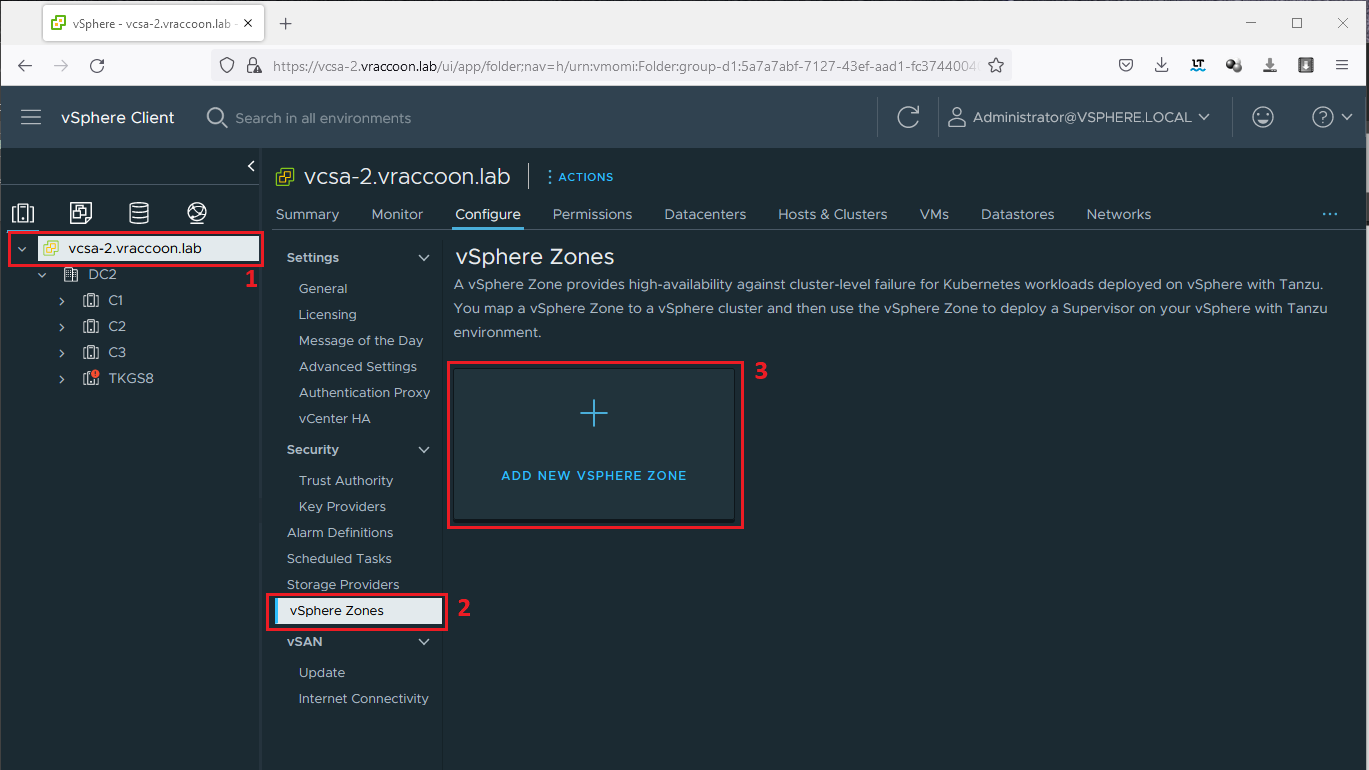

To create vSphere Zones, navigate to your vCenter Object (1) –> vSphere Zones (2) –> ADD NEW VSPHERE ZONE (3)

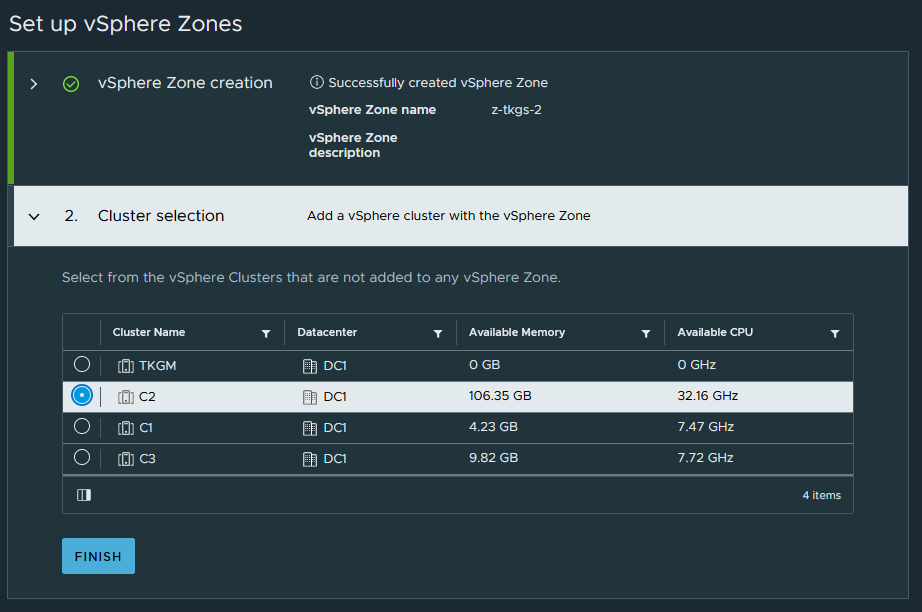

In the following wizard, give your zone a name. Please note that the name must follow DNS naming conventions. In the second step, assign a vSphere Cluster to it.

Create Storage Policy

We need to create a vSphere Storage Policy to identify the datastores to be used for our Supervisor Control Plane VMs. So far, nothing new. Just keep in mind that this Storage Policy must have a compatible datastore in each of the vSphere Zones.

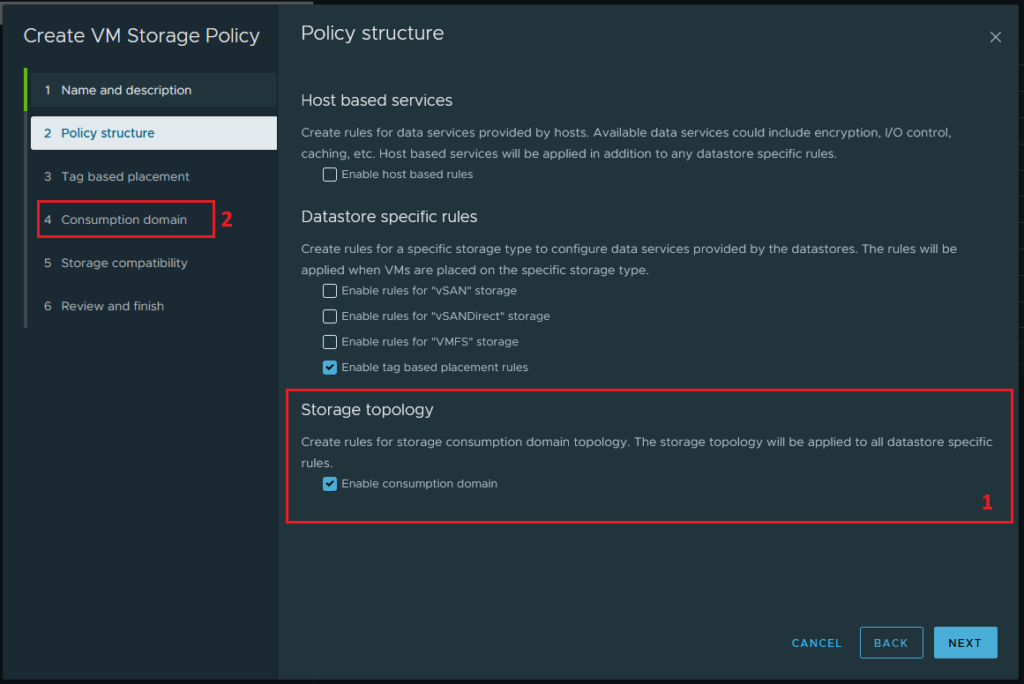

There is one new thing though: when creating the Storage Policy, there is a new option called Storage Topology (1). Here we need to check Enable Consumption Domain. This adds a new step to the wizard called Consumption domain (2). If you forget this part, you’ll get an error message later in the Tanzu installation wizard.

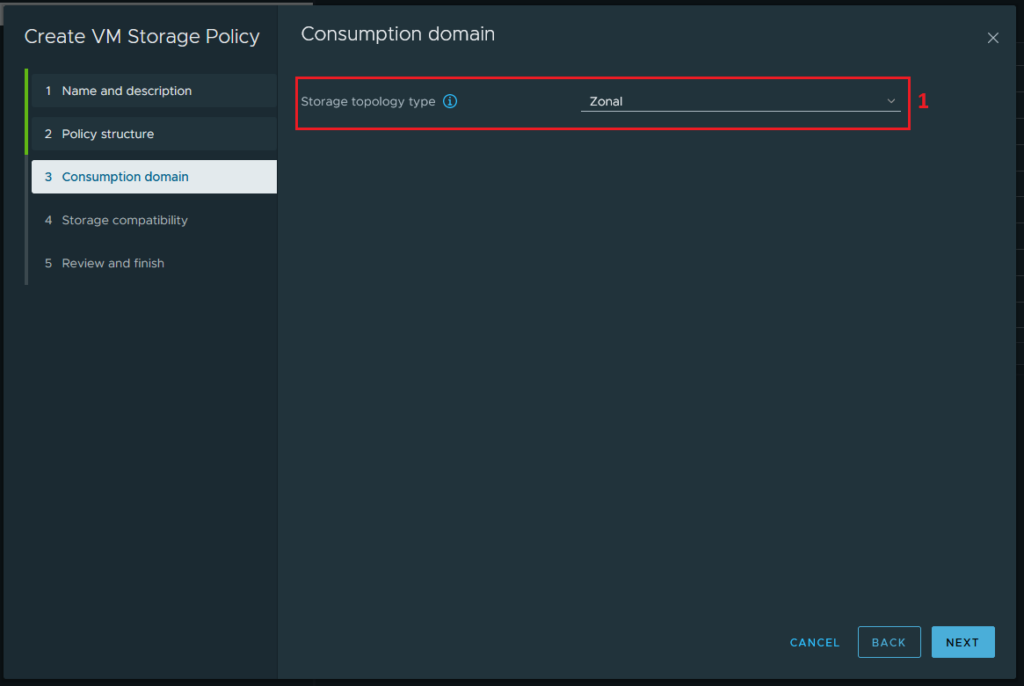

During this step select Zonal (2).

Supervisor Cluster Installation (snippet)

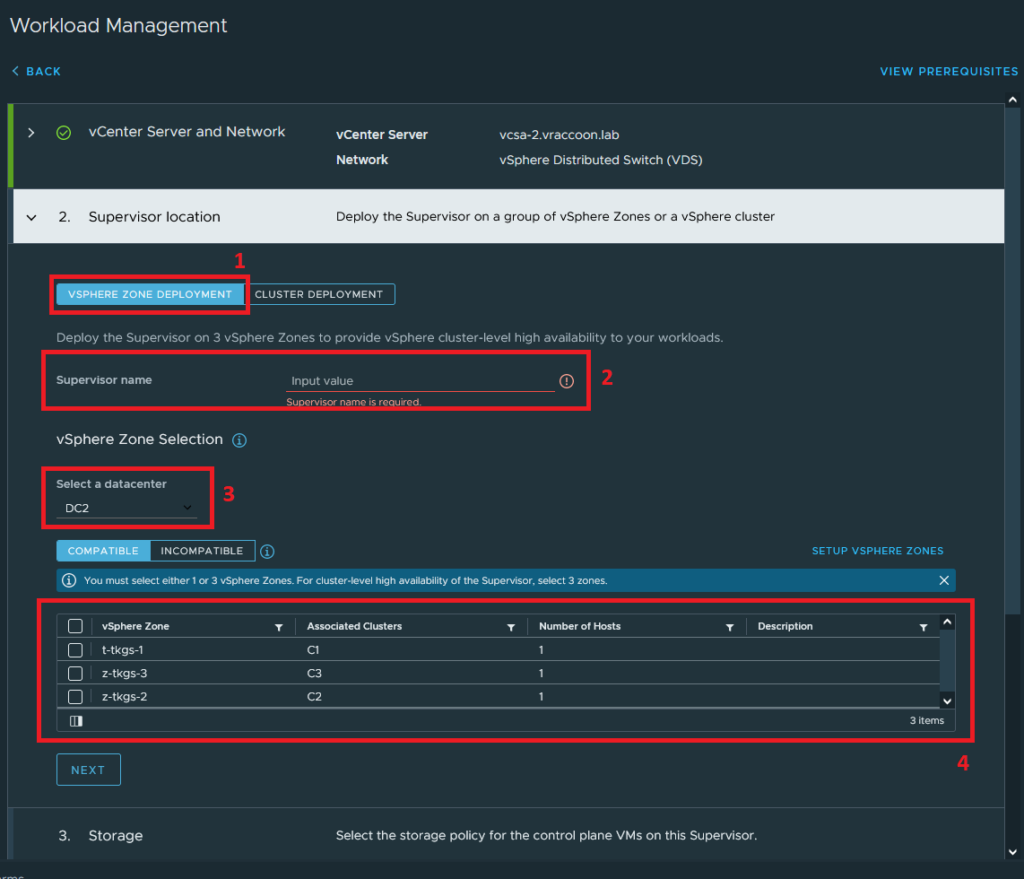

In the Tanzu installation wizard, at step 2 (Supervisor location), you decide whether to provision your Supervisor Control Plane VMs traditionally within one vSphere Cluster or across multiple vSphere Zones.

Select VSPHERE ZONE DEPLOYMENT (1), enter a Supervisor name (2), select the Datacenter (3), and select your three vSphere Zones (4).

If you don’t see your desired network later in the wizard, it probably means that not all hosts across all vSphere Zones have access to it. Only networks that are present on all ESXi hosts are listed.

Create Tanzu Kubernetes Cluster (TKC)

Creating the TKC is pretty much the same as it was before, except that the node pools have a new parameter called “failureDomain” (see the entry under nodePools in the manifest below). This parameter is now mandatory for each node pool and expects the name of one of the three vSphere Zones.

The control plane nodes, in turn, are spread evenly across the configured vSphere Zones.

    apiVersion: run.tanzu.vmware.com/v1alpha3
    kind: TanzuKubernetesCluster
    metadata:
      name: tkc-backend
      namespace: sns-backend
    spec:
      topology:
        controlPlane:
          replicas: 3
          vmClass: best-effort-small
          storageClass: sp-tkc
          tkr:
            reference:
              name: v1.21.6---vmware.1-tkg.1.b3d708a
        nodePools:
        - name: np-1
          failureDomain: t-tkgs-2
          replicas: 2
          vmClass: best-effort-small
          storageClass: sp-tkc
          tkr:
            reference:
              name: v1.21.6---vmware.1-tkg.1.b3d708a
      settings:
        storage:
          classes: [sp-tkc]
          defaultClass: sp-tkc
        network:
          cni:
            name: antrea
          pods:
            cidrBlocks: [192.168.224.0/20]
          services:
            cidrBlocks: [192.168.240.0/20]
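The manifest above pins its only node pool to a single zone. To have worker nodes in every zone, one option is to define one node pool per vSphere Zone. The fragment below is a minimal sketch of how the nodePools section could look in that case. The zone names t-tkgs-1 and t-tkgs-3 and the pool names np-2 and np-3 are assumptions for illustration; only t-tkgs-2 and np-1 appear in the example above, so replace them with your own zone and pool names.

```yaml
# Sketch only: replaces the nodePools list under spec.topology.
# Zone names t-tkgs-1 and t-tkgs-3 are assumed; use your own vSphere Zone names.
nodePools:
- name: np-1
  failureDomain: t-tkgs-1   # assumed zone name
  replicas: 2
  vmClass: best-effort-small
  storageClass: sp-tkc
  tkr:
    reference:
      name: v1.21.6---vmware.1-tkg.1.b3d708a
- name: np-2                # hypothetical pool name
  failureDomain: t-tkgs-2   # zone name taken from the manifest above
  replicas: 2
  vmClass: best-effort-small
  storageClass: sp-tkc
  tkr:
    reference:
      name: v1.21.6---vmware.1-tkg.1.b3d708a
- name: np-3                # hypothetical pool name
  failureDomain: t-tkgs-3   # assumed zone name
  replicas: 2
  vmClass: best-effort-small
  storageClass: sp-tkc
  tkr:
    reference:
      name: v1.21.6---vmware.1-tkg.1.b3d708a
```

With a layout like this, losing one zone takes out one node pool while the pools in the other two zones keep running.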

Additional Notes

Apparently, neither the Supervisor Control Plane VMs nor the TKC nodes (control plane or worker) are recreated in another vSphere Zone if one zone fails completely.
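Since nodes are not recreated in another zone, it can help to spread the workload itself across zones, so that a zone failure removes only part of the replicas. The following is a minimal sketch using the standard Kubernetes topologySpreadConstraints field. It assumes that the TKC nodes carry the well-known topology.kubernetes.io/zone label reflecting their vSphere Zone, which you should verify on your own cluster. The Deployment name, labels, and image are hypothetical.

```yaml
# Sketch only: spreads three replicas across zones inside the TKC.
# Assumes nodes are labeled with topology.kubernetes.io/zone.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web                 # hypothetical name
spec:
  replicas: 3
  selector:
    matchLabels:
      app: web
  template:
    metadata:
      labels:
        app: web
    spec:
      topologySpreadConstraints:
      - maxSkew: 1                                # at most one pod difference between zones
        topologyKey: topology.kubernetes.io/zone  # assumed node label
        whenUnsatisfiable: ScheduleAnyway         # still schedule if a zone is unavailable
        labelSelector:
          matchLabels:
            app: web
      containers:
      - name: web
        image: nginx        # hypothetical image
```

ScheduleAnyway is chosen here so that pods can still be placed in the surviving zones when one zone is down. DoNotSchedule would enforce the spread strictly, but it could leave pods pending in that situation.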