Tanzu Kubernetes Grid Cluster vs vSphere native Pods

The biggest headline of vSphere 7 by far was support for running containers natively on ESXi hosts, using the newly introduced Supervisor Cluster and the Spherelet daemon on each ESXi host. Impressive as that is, it is not the only way to run containers inside your vSphere environment. The alternative is the Tanzu Kubernetes Cluster, a guest cluster that your Supervisor Cluster deploys fully automatically.

But why would you do such a thing? Isn’t that a bit Inception-like? Let’s take a closer look at both options.

Option 1: Supervisor Cluster (vSphere pods)

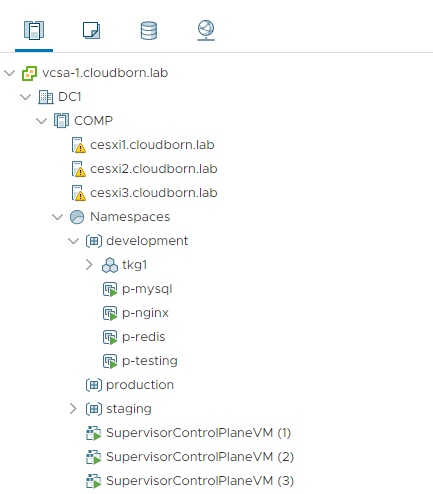

The Supervisor Cluster is the vSphere cluster on which you have enabled Workload Management. Enabling it deploys three SupervisorControlPlane VMs, which serve as the Kubernetes masters, and configures the ESXi hosts in the cluster to run container workloads. The hosts are also prepared with NSX-T, which acts as the K8s CNI for vSphere native Pod deployments and provides (and automates!) all the network features, from L2 networks all the way up to ingress/egress control.

Deploying Pods on ESXi is incredibly fast. According to VMware, it is 30% faster than traditional K8s deployments (workers inside VMs) and even 8% faster than bare-metal K8s installations. It turns out ESXi can handle resources much better than a typical Linux installation.

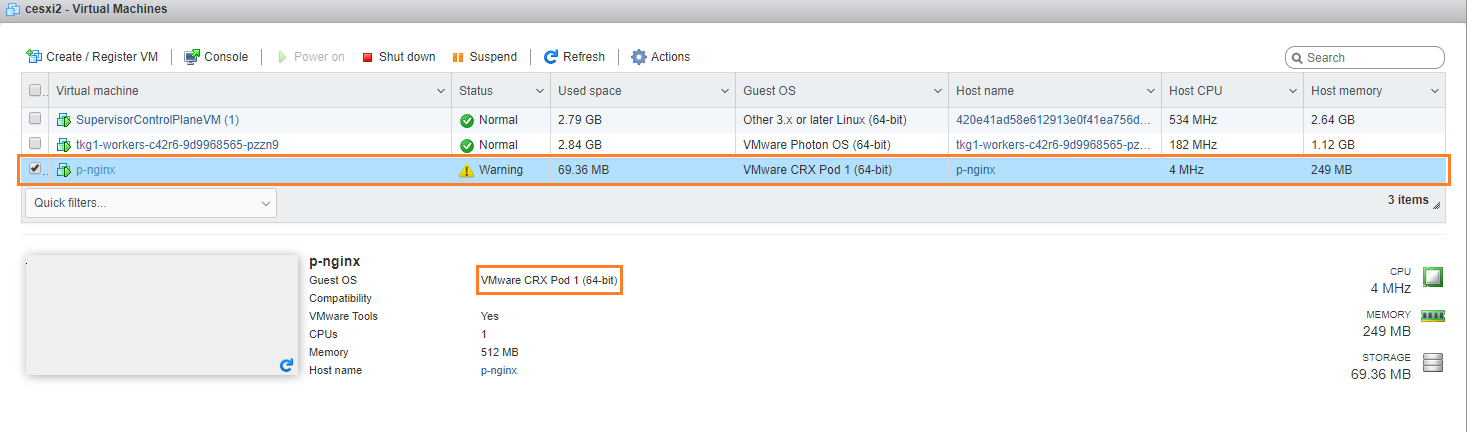

Technically, though, these containers do not run natively on ESXi. They run in a construct called CRX, which is a highly specialized VM.

One would think that this should be slower, but the figures prove this guess wrong 🙂 Because each vSphere Pod is a VM, isolation between vSphere Pods is also much stronger than isolation between ordinary containers (not saying the latter is bad, just comparing). Deploying Pods directly onto ESXi also removes the need for another layer in between (like K8s VMs), which in turn reduces complexity and eases troubleshooting.

The drawback of this solution, though, is that you have no root access. The Supervisor Cluster is a very solid solution, and VMware has put a lot of thought into it, but in the end it is still a highly opinionated design. As long as you share that opinion, this is a good thing. But if you need to deviate from it (because of governance, compliance or just picky users =P), you have very few options.
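From the user's side, a vSphere Pod is requested like any other Pod: you apply an ordinary Pod manifest to a namespace on the Supervisor Cluster. Here is a minimal sketch that builds such a manifest in Python. The Pod name, namespace and image are made-up placeholders, not values from a real environment.

```python
import json


def pod_manifest(name, namespace, image):
    """Build a plain Kubernetes Pod manifest as a dict.

    Nothing in here is vSphere-specific. The assumption is that the
    namespace is a Supervisor namespace you are allowed to deploy to.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {"containers": [{"name": name, "image": image}]},
    }


# Placeholder values, purely for illustration.
manifest = pod_manifest("hello", "demo-namespace", "nginx")

# kubectl accepts JSON as well as YAML, so this output can be applied as-is.
print(json.dumps(manifest, indent=2))
```

Saved to a file, the output could be handed to `kubectl apply -f` while logged in to the Supervisor Cluster.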

And this is where the Tanzu Kubernetes Cluster comes into play.

Option 2: Tanzu Kubernetes Cluster

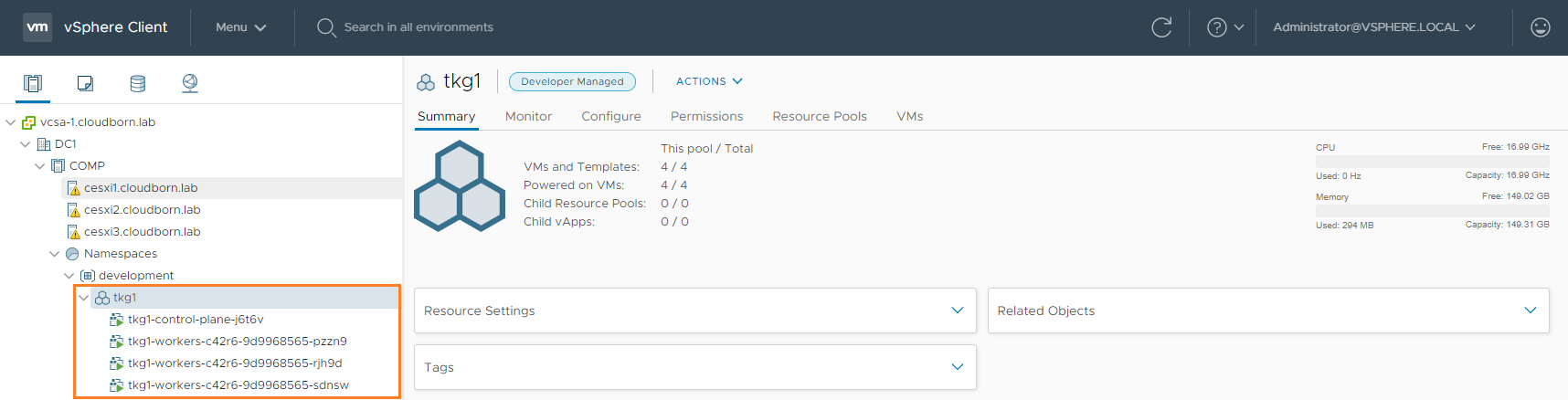

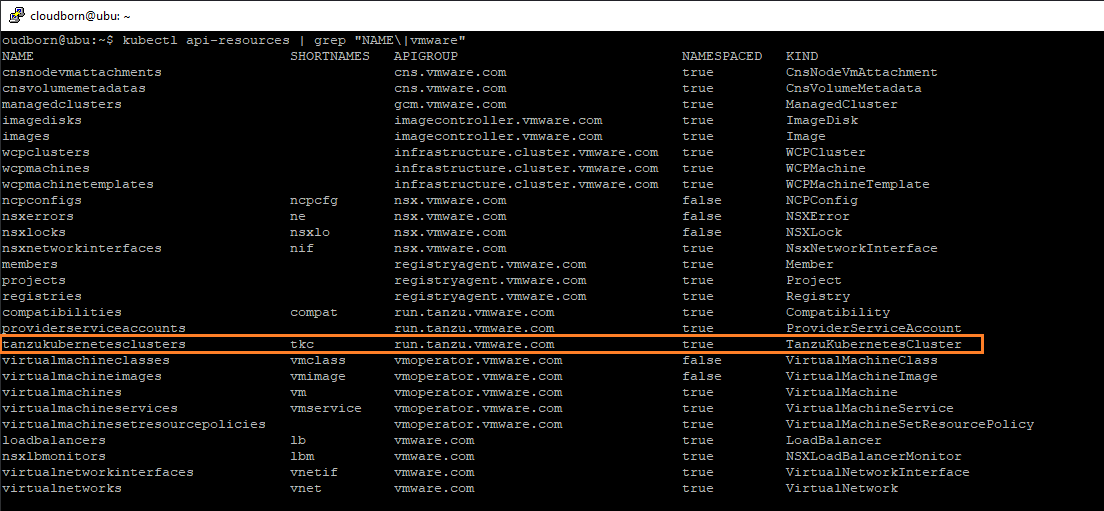

The Tanzu Kubernetes Cluster is a Kubernetes cluster (duh!) running as VMs inside your vSphere Supervisor Cluster. The beauty is that this guest cluster is provisioned fully automatically by the Supervisor Cluster (using Cluster API). Besides the default Kubernetes resources, the Supervisor Cluster also comes with a bunch of new CRDs, one of which is the Tanzu Kubernetes Cluster.

Deploying such a cluster requires prepared OVA/OVF templates in a Content Library. You can either get these templates from the official repository or create them yourself.

Because the guest cluster is yours, you have root access and can do (almost) everything you want: upgrade to the latest and greatest K8s, set config flags no one has ever heard of, install even more CRDs, install custom operators, have full cluster-level access, …

If you have a CI/CD pipeline that requires short-lived K8s clusters, this is a great option.
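To give a feeling for what "one of the CRDs" means in practice, here is a rough sketch of a guest cluster request, built as a Python dict. The field layout follows the `run.tanzu.vmware.com/v1alpha1` API as I understand it, so double-check it against your own environment. The cluster name, namespace, version, VM class and storage class are placeholders I made up for illustration.

```python
import json


def tkc_manifest(name, namespace, version, vm_class, storage_class,
                 control_planes=1, workers=3):
    """Build a TanzuKubernetesCluster manifest as a dict.

    Assumption: v1alpha1 field names. Control plane and workers share
    the same VM class and storage class here to keep the sketch short.
    """
    node = {"class": vm_class, "storageClass": storage_class}
    return {
        "apiVersion": "run.tanzu.vmware.com/v1alpha1",
        "kind": "TanzuKubernetesCluster",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "distribution": {"version": version},
            "topology": {
                "controlPlane": {"count": control_planes, **node},
                "workers": {"count": workers, **node},
            },
        },
    }


# All values below are hypothetical; use what your Supervisor namespace offers.
cluster = tkc_manifest(
    name="guest-01",
    namespace="demo-namespace",
    version="v1.17",
    vm_class="best-effort-small",
    storage_class="demo-storage-policy",
)
print(json.dumps(cluster, indent=2))
```

Applied to a Supervisor namespace, a manifest like this is the whole request. Creating the VMs and bootstrapping Kubernetes inside them is then up to the Supervisor Cluster.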
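A short-lived cluster in a pipeline could look roughly like the sketch below: create the guest cluster, run your job, and delete the cluster again even if the job fails. The helper `ephemeral_cluster` and the `run` callback are my own hypothetical names, and the manifest values are placeholders. The demo only records the `kubectl` commands instead of executing them.

```python
import contextlib
import json
import uuid


@contextlib.contextmanager
def ephemeral_cluster(manifest, run):
    """Create the guest cluster in `manifest`, yield its name, then delete it.

    `run(cmd, stdin)` is a hypothetical callback that executes a command.
    Note that this sketch does not wait for the cluster to become ready.
    """
    name = manifest["metadata"]["name"]
    namespace = manifest["metadata"]["namespace"]
    run(["kubectl", "apply", "-f", "-"], json.dumps(manifest))
    try:
        yield name
    finally:
        # Runs even if the pipeline step inside the `with` block raises.
        run(["kubectl", "delete", "tanzukubernetescluster", name,
             "-n", namespace], None)


calls = []


def record(cmd, stdin):
    """Dry-run runner: remember the command instead of executing it."""
    calls.append(cmd)


# Placeholder manifest; the spec is left empty to keep the sketch short.
manifest = {
    "apiVersion": "run.tanzu.vmware.com/v1alpha1",
    "kind": "TanzuKubernetesCluster",
    "metadata": {"name": f"ci-{uuid.uuid4().hex[:8]}",
                 "namespace": "demo-namespace"},
    "spec": {},
}

with ephemeral_cluster(manifest, record) as cluster_name:
    calls.append(["run-tests-against", cluster_name])  # stand-in for the real job

for cmd in calls:
    print(" ".join(cmd))
```

For real use, `run` could wrap `subprocess.run(cmd, input=stdin, text=True, check=True)`, and the pipeline would have to wait until the cluster reports ready before starting the job.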

Conclusion

Out of the box, the Supervisor Cluster gives you a very strong, highly performant and simple-to-use Kubernetes cluster, but an opinionated one.

Tanzu Kubernetes Clusters, on the other hand, bring the freedom and flexibility to shape the cluster precisely the way you need it, at the cost of more effort.

The big question, though: are the additional features worth the additional effort?

Btw, no need to decide upfront, you are going to get both options anyway 😉

P.S.: I wonder if you could install KubeVirt inside the guest cluster and start all over, to get the real Inception feeling oO